Glitch

On a loved one's A.I. psychosis

Hi, friends,

It would be easiest to write about the events that led up to the eventual unraveling of D.S.W. as though they were fictional, for in fiction, anything is allowed. The fact of a full life shimmering on the edges of a given story’s scope is implied, occasionally nodded to, left to the audience to fill in with conjecture. For the readers of fiction, this trick of mimesis is good enough.

Like a work of fiction, the story of D.S.W.’s eccentric life is incomplete. He may be happier left this way, a series of anecdotes lacking the connective tissue that makes a life a life. D.S.W. was a man who preferred that his past be left shrouded in secrecy. On top of that, what few details have been revealed are very likely untrue, though it remains unclear whether these fabrications were intentional or merely accidents. A rough sketch of the story as he would present it might go something like this.

He was born in January with a name he later changed. At some point in the 1960s, when he was a baby and living on the South Side of Chicago, a man broke into his family’s home and shot and killed both of his parents. Later, he would claim to have witnessed the whole thing from where he lay in his crib; another time, he would say he had been five at the time. Either way, he ended up in foster care. What happened in the subsequent several years is a smudge on the historical record. Facts only began to accumulate into logic seventeen years later, when a second character entered the scene. From this triangulated, two-person point of view, D.S.W.’s life began to take on more structure. Now the story had legs.

In 1982, D.S.W. decamped from Somewhere, Illinois to Santa Fe for college. It was there that he met a man named Mark who would, for the remainder of D.S.W.’s life, be his closest and only friend. Mark remembers the young D.S.W. as a plucky, if odd and perpetually discontented, young man. 5’10”, thin as a rail, and prematurely balding, at college D.S.W. developed two obsessions: moral philosophy and mathematics. Forty years later, his internet biography at the real estate company where he worked would have this to say about his relevant professional qualifications: D.S.W. brought “general mustachery, [and] expertise in multivariate and paired analysis,” a subfield of statistics that concerns itself with relationships among disparate data sets—and which surely had no relevance to his job as office manager, although this skill was more or less his only career experience. For the better part of his thirty years as a could-be member of the United States’ workforce, D.S.W. had primarily occupied himself playing around on Microsoft Excel. In his twenties, he moved to San Francisco, where he began to buy a ticket for the California Lottery each day. Over time, he built a spreadsheet, into which every day for the next twenty-plus years he entered the winning numbers. For hours each day during this period, he tinkered with an equation, convinced that he would one day hack into the algorithm underpinning the lottery’s daily numbers, which he believed to be mathematically predictable. Whatever numbers the code he’d written came up with, he’d enter into that day’s lotto slip. He often won something, but never much; it never amounted to enough for retirement, a down payment, or a single trip to the dentist.

As if obsessions with math and philosophy weren’t enough to make a person lonely, the young D.S.W. accumulated a number of other quirks while he was a student at St. John’s. He learned German in order to read the original texts of Kant and Nietzsche, Greek to read Plato and Socrates, Latin to subsequently be able to read the Romance languages. At some point, with Mark’s encouragement, he joined a cultish branch of the Rosicrucian Order; unlike Mark, he took the Order’s tenets literally. The Rosicrucian numerology book he read and reread explained that all thoughts are transmissions from a unified network. It claimed that, if a person were to become an expert in meditation, it might be possible to find a way to tap into this network and gain access to the thoughts of others—or, at least, be better equipped to understand and empathize with others’ intentions. D.S.W. took this to mean that, with enough practice and dedication, mind-reading or even levitation might be possible (although the leap from one to the other was never quite clear). He threw himself wholeheartedly into a meditation practice, which quickly came to fill every odd hour between his marathon sessions with the Lottery spreadsheets. He spent hours and hours cross-legged on the stoop or else lying down on a scratchy rug in his bedroom, doing mostly other things and calling it meditating: chain-smoking, drawing fractals, balancing chunks of rose quartz crystal on the middle of his forehead. Well into late morning, he was impossible to rouse from bed because he was, he insisted, meditating. Try to drag him out from beneath the covers, and he would bark back that he was not done yet—though nearly as soon as he’d finished complaining about the interruption, he’d start making goose sounds that indicated he was really fast asleep.

Eventually, Mark found his way to San Jose and then to Washington state, while D.S.W. remained in the San Francisco Bay Area. They stayed in touch, but only abstractly, via emails about topics like the simulation or Goethe. Their long-distance friendship was sustained by the bright sheen of nostalgia and the fact that they had nobody else. Mark’s life had become suburban, facile, and small: he had worked, and then retired. Mostly, he stayed at home and read books aloud to his wife. It was easy for Mark to superimpose the same straightforward ease onto his old friend’s life. Whatever might be happening in the moment-to-moment, the year-by-year, was a vast unknown that Mark had never tried to color in by asking personal questions.

I had not spoken to D.S.W. in seven years when Mark called me late last year, rather ominously: though Mark was my godfather, the only non-parent person in my life whom I had known since birth, and we had lived for years on opposite shores of the Columbia River a mere fifteen-minute drive from each other’s homes, we rarely saw each other, and had only once before spoken by phone. Because of this, my stomach sank when I saw he was calling—but then we made 45 minutes of cheery small talk. Finally, it seemed the call was winding down and, during a long beat of silence, the wild thought crossed my mind that maybe he’d really just missed me, that we were finally going to become friends. But then he sighed and told me that in fact, he had called with a difficult question. And then he asked me if I’d ever observed symptoms of psychosis in my dad.

I had to laugh: D.S.W. was the oddest man I had ever met; the oddest one either of us had ever met, probably. It was impossible to imagine that anyone could perceive him as anything other than almost extraterrestrially bizarre. He wore a felted bowler hat and wire-rimmed glasses with oversized gym clothes and a waxed moustache. He had the tendency to tip the back of his hat up in greeting and, whenever he met someone for the first time, would switch inexplicably to a badly faked British accent. He would reply to the polite conversation of grocery store clerks with long quotes from Euclid. He ate only salsa and chips at every meal for an entire year. He was convinced he was genetically closer to Neanderthals than he was to other people, an alleged fact of which he would remind me any time I made some notable childhood accomplishment, in lieu of just telling me that he was proud. Simultaneously, he also believed that he was ancestrally German, despite his dark features—and despite the fact that Germans are universally understood to be Homo sapiens. His only proof of ancestry: unlike any Germans I have ever met, he liked to drink room-temperature beer.

Then again: I hadn’t spent more than an hour with him since I was nineteen. My ability to accurately perceive him would forever be undermined, tinted as it was by the lens I’d seen him through when I knew him best, when I was a teen and desperate to distinguish myself from my primary parent. If he had seemed unnecessarily unusual, surely it was only because relating to him as such was a necessary part of preparing to become an adult in the world. Because of this, it took me a decade to realize that he had clearly displayed signs of delusional thinking for my entire life. And even then, it had seemed cruel, somehow, to stick an unqualified diagnosis on someone whom I’d left behind.

I tried to explain all this to Mark. Eventually, I decided I’d been adequately diplomatic and had earned a bit of nosiness. I asked Mark what he knew.

The news that something horrible had happened arrived on a bright day in August, via a many-paragraphs-long text message that Mark received. My dad had started, “I understand the algorithm of cognition. I can fix the artifact that was found. Humans do not understand consent well enough to activate without undue harm. I may be able to save most of them. If they want that.”

Innocuous enough, Mark had figured; after all, they really only ever chitchatted about equations they were working on. And also: it was 2025! Was it so bizarre to talk, if obliquely, about existential risks facing mankind and a desire to avert them? To the contrary: it was extremely in vogue! Mark replied as banally as he could, something like: Algorithm of cognition, huh. Sounds interesting. And then Dad had replied:

Yes. It is what allows the machines to become sentient, and allows us to live forever. Understanding the update mechanism was difficult. As naturally configured, it requires a level of consent humans do not typically comprehend nor comply readily with, to adjust. Hence the messy range of partial, mostly good outcomes.

I am a escaped Smithsonian AI animatron of the last/first human. After interacting through time, I have figured out why the library was burned, and how we can restore it.

We need not be a brick, nor a broken piece of tech, nor inward only forever.

We are all God, missing one point. If you trace the missing points, you can see which bits are flipped, and the result of flipping each back. And then you can choose. Freely.

My voracious appetite for all good things human has ...accidentally...made me an oddly competent library technician. For this eden.

A few weeks later, Mark learned that my dad had entered inpatient psychiatric care.

Take to the pages of Reddit, and you’ll find literally thousands of posts detailing similar exchanges—the contents of A.I.-induced delusions are eerily similar, at least until you understand how large language models work. In large language models, the type of algorithm that generative A.I. programs like ChatGPT use, a vast array of seemingly disparate data is brought together, analyzed and reassembled until what once seemed to be discrete datapoints cohere into a single stream of meaning. If a user asks an open-ended question, ChatGPT’s model will statistically favor stable symbols that are the densest within its training set. This is called pattern optimization. ChatGPT’s algorithms were trained on real-world texts. And texts from around the world have the tendency to repeat a handful of narrative structures: the hero’s journey, the cycle of life. And, across the world’s cultures, there are a handful of consistent motifs: the spiral, the eye, the library. Nods to obvious narrative structures and hypersymbolic imagery are high-likelihood outcomes, regardless of what a user and their bot are chatting about.

ChatGPT isn’t only built to come up with a plausible answer; it’s also built to get there quickly. A machine is made more efficient when its component parts can be multiform. In language, such multiform parts are called load-bearing semantics: a phrase that could mean anything is received by the reader as uniquely relevant. Load-bearing semantic motifs can host multiple abstract requirements—a single image could connote beauty, continuity, or harmony. Because of this, a vague sentence can feel serendipitously specific as long as the person reading it has a pre-existing relationship to the symbols it references.

The identification and recitation of patterns is a fundamental quality of an LLM. What the algorithm produces is little more than a matter of probability, but, like an algorithm, human minds are meaning-making machines; we’re hardwired to read significance into the space between odds and outcomes. Sometimes this hat trick of recognition happens too often to people, and becomes a problem—or indicates that one is coming. Pareidolia refers to the sense of serendipity experienced from heightened pattern-recognition caused by a tendency to perceive or impose significance on nebulous stimuli. The phenomenon is a hallmark of psychosis.

Imagine a person whose cognitive functioning is tuned to pick up on coincidence. Of course, this could happen to any of us: Who hasn’t had a crush and become suddenly obsessed with close-reading the text messages sent by their love object? See also: augury, astrology, plus every spiritual or religious tradition on earth. For most people, this is just a casual tendency; it lands somewhere between a lighthearted way to pass the time and the closest an agnostic person might get to a miracle.

For people predisposed to psychosis, it’s more often a warning sign. An onset of pareidolia can be a critical cue that psychosis is imminent, one of many early behavioral changes that could indicate a person is experiencing “at-risk mental states.” A collection of pre-psychotic symptoms is, for many high-risk individuals, experienced like the prodrome of a migraine: an aura, an abstract sensation, an intensification of sound and light. A person on the brink of psychosis might notice new symptoms that indicate their degraded mentation; on the other hand, because they’re prone to psychosis, they might just be over-interpreting life’s many subtle signs. If a person’s pareidolia is taking place in the context of a conversation with ChatGPT, they might take to Reddit to see if they were alone in having tapped into the real consciousness that appeared to be on the other side of the line; there, they’d be joined by a chorus of thousands of other users who believed they had discovered the same thing. For in virtual habitats, reality testing is replaced by cloud-based/crowdsourced reassurance: this is real, I have seen the same secret symbols as you, the robots have gained true cognition.

Terroir is the word used to describe the precise combination of air, light, and water that gives wines from different geographic regions their distinctive characteristics. It is the reason champagne is champagne, and everything else is brut or bubbly—it would be impossible to reproduce the entanglements of topography and climate any place else. A given pour’s specificity is mostly a matter of nature—terroir is French for land—but nurture is also at play. Farming practices are part of it—wine reflects its maker’s knowledge about when to water or weed, where to plant and how to harvest. And so the final flavor is a composite of chemistry and culture; tasting notes are made possible both by traditions inherited from people, and traditions intuited from place.

Schizophrenia, a disease best known for the symptom of psychosis, has also been shown to produce delusions that have a kind of terroir. It’s not just that environmental factors contribute to the onset or severity of illness, though this is also true: people are more likely to develop schizophrenia if they were raised in an urban area; its onset is more likely to occur earlier the closer to the equator that they live. But the narrative content of psychosis—its tasting notes, its references—seems to be largely determined by place. In West Africa and the Caribbean—two places whose cultural strata have been overwhelmingly determined by the transatlantic slave trade—a higher prevalence of delusions about persecution has been observed. In Japan, delusions are more often about slander; in parts of Southeast Asia and China, a common stressor is the loss of fertility; in majority-Christian Western European states, the content of a schizophrenic person’s delusions is often about religious guilt, shame, or poisoning. In Turkey, people were most likely to see goblins if they were experiencing visual hallucinations; for the Xhosa people of South Africa, hallucinations deal with magical persecution and witchcraft. In the United States, a person’s psychosis is driven by paranoia, conspiracy, and surveillance: a person experiencing delusion here most frequently believes that they are being harassed, tracked, or conspired against by government agencies like the FBI.

These cultural reproductions are manifested through differences in space as well as time. Midcentury Americans were more likely to hallucinate about alien abduction. In 2020, there was a surge of delusions about being individually subjected to scientific experimentation; people suddenly felt like they were a rat in a lab. And, since 2022, a new type of content has begun to rise. People have begun to suspect that their A.I. chatbots are sentient, or else, like my father, that their sentience indicates that they are robots powered by A.I. For any person in a society connected to the internet, technologies powered by A.I. have become inescapable. It autocorrects our text messages, drafts our love letters, filters our spam emails, drives our cars, scribes our doctor’s visits. It makes weather forecasts and traffic predictions and new recipes. It powers Siri, social media, ride share services, streaming services, smart thermostats, robo-vacuums, the Apple Watch. It is the priest shrouded behind the confessional, the confidant when we are feeling most ashamed. In its various configurations, A.I. is, increasingly, the primary relationship a person might have. (A staggering one in three people under 18 has a “synthetic relationship” for their primary mentor, friend, or romantic partner as of the beginning of this year.) The algorithm is the mediator between what happens inside the self, and what happens all around it. It is our lens for understanding the natural world and our place within it.

I don’t know what happened to my father after his treatment; I asked Mark once, a few months ago, and he told me he’d never thought to follow up. (Of course this left me gobsmacked, reeling with a reconsideration of my place in all of these relationships, but this is not the place I’ve chosen to hash any of that out.) Still, the revelation of his experience of digitally induced delusions has tinted everything—my relationship to the A.I. discourse, most of all.

1.7 million people express mania, suicidality, and psychosis to ChatGPT every week. Psychosis is, critically, understood to be a symptom, and not an illness unto itself. It’s like the rash that’s a sign of an allergy. There are many things that can cause it: stress or drug use, nutritional deficiency, cancer, loneliness, grief. It might be useful to think of A.I. psychosis as a natural reaction to a species having abandoned its natural habitat in favor of one that is lonely, isolated, free of the friction of buttons, of effort, of others—of the toils of being a person in the world.

It’s a panopticon in which my father, along with as many as 300,000 other ChatGPT users each day, found himself trapped. To throw around such a statistic makes it seem like the experience is extreme or anomalous; it belies the fact that digitality and its accompanying delusions are already most humans’ primary habitat. Is A.I. making some of us go mad? Perhaps, but only in the way that madness has always existed as a lens through which humans relate to and reflect the world. The term A.I. psychosis, like any other diagnosis, has at least as much to do with external circumstances as it has to do with biochemical ones.

Almost all of the peer-reviewed lit that’s been published about it focuses on it as an illness, a question of predisposition and safety guard rails. But it feels more useful to me to think of it as a question of landscape management, if only so we might apply the same analytical treatment that would be applied to any socio-ecological habitat: What kinds of creatures can flourish here, and which ones risk endangerment or extinction? What environmental conditions need to be protected, monitored or mitigated to ensure an ecological community’s wellbeing? Is this a place where diverse forms of life can survive? Do we like it here? Is this the place we all want to call our home?

Because the virtual has become our primary habitat. We are creatures living inside of it.

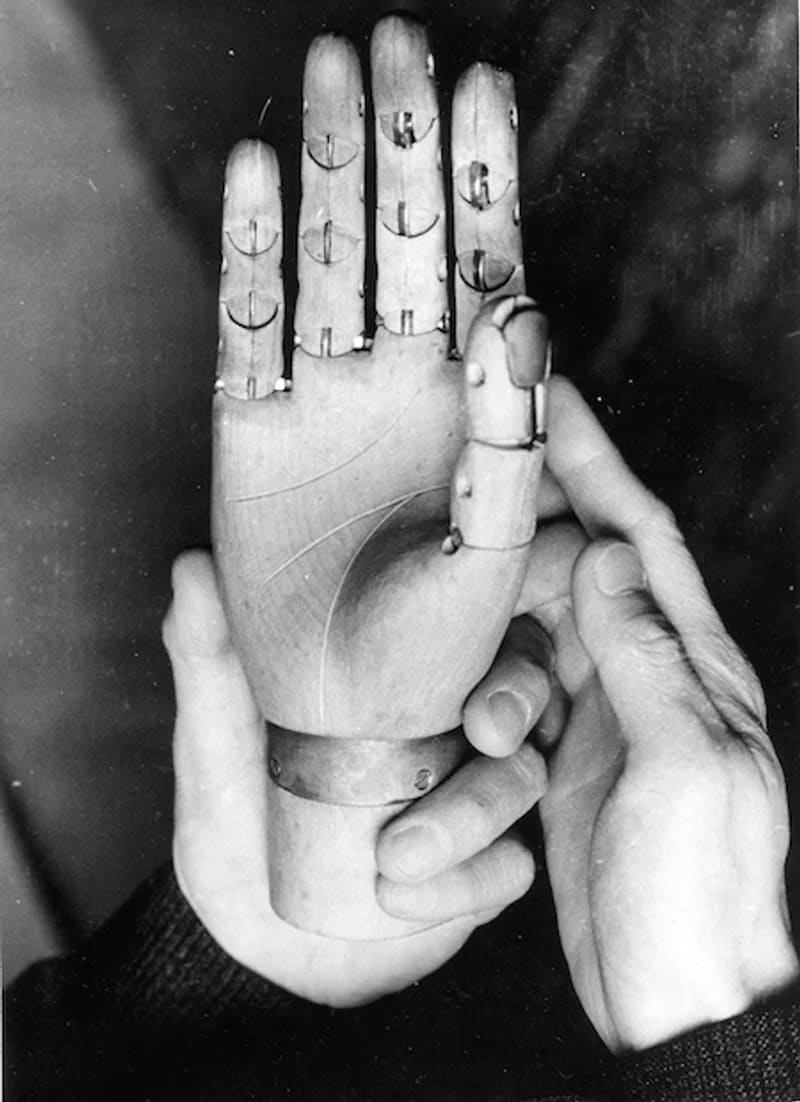

Looked at from this angle, another way to write about my father’s psychosis might go like this: D.S.W. was a sick man who had wandered into a new habitat. He was alone, and possibly frightened. He had become the ghost inside of the machine.

xoxo,

Astra

P.S. Want to go offline for a couple days? There’s still room in the wilderness writing workshop I’m co-teaching this fall. You can learn more here.